Fairness in Machine Learning

by Oliver Thomas and Thomas Kehrenberg

{ot44,t.kehrenberg}@sussex.ac.uk - Predictive Analytics Lab (PAL), University of Sussex, UK

Fairness based on similarity

- First define a distance metric on your data points (i.e. how similar the data points are)

- Can be just Euclidean distance but is usually something else (because of different scales)

- This is the hardest step and requires domain knowledge

Pre-processing based on similarity

- An individual is then considered to be unfairly treated if it is treated differently than its "neighbours".

- For any data point we can check how many of the $k$ nearest neighbours have the same class label as that data point

- If the percentage is under a certain threshold then there was discrimination against the individual corresponding to that data point.

- Then: flip the class labels of those data points where the class label is considered unfair
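The steps above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the distance is Euclidean on standardised features (a domain-specific metric would normally replace it), labels are binary, and the function names, `k`, and `threshold` are hypothetical choices rather than values from the lecture.

```python
import numpy as np


def knn_label_agreement(X, y, k=3):
    """Fraction of each point's k nearest neighbours that share its label."""
    # Standardise features so that no single scale dominates the distance
    # (an assumption; a domain-specific metric would replace this step).
    std = X.std(axis=0)
    Xs = (X - X.mean(axis=0)) / np.where(std > 0, std, 1.0)
    # Pairwise Euclidean distances between all points
    diff = Xs[:, None, :] - Xs[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    return (y[neighbours] == y[:, None]).mean(axis=1)


def flip_inconsistent_labels(X, y, k=3, threshold=0.5):
    """Flip binary labels whose neighbourhood agreement is below the threshold."""
    agreement = knn_label_agreement(X, y, k)
    unfair = agreement < threshold
    y_new = y.copy()
    y_new[unfair] = 1 - y_new[unfair]
    return y_new, unfair
```

Note that flipping is done in one pass on the original labels; whether to iterate until no label changes is a design choice this sketch leaves open.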

Considering similarity during training

Alternative idea:

- a classifier is fair if and only if the predictive distributions for any two data points are at least as similar as the two points themselves

- (according to a given similarity measure for distributions and a given similarity measure for data points)

Considering similarity during training

- Needed: a similarity measure for distributions

- Then add the fairness condition as an additional term in the optimization loss
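One way such a loss term could look is sketched below. The choices here are assumptions made for illustration: the classifier is binary, so the predictive distribution is Bernoulli and the total variation distance between two predictions is simply $|p_i - p_j|$; the data-point distance is Euclidean; and the names `individual_fairness_penalty`, `total_loss`, and `weight` are hypothetical.

```python
import numpy as np


def individual_fairness_penalty(probs, X):
    """Mean violation of D(p_i, p_j) <= d(x_i, x_j) over all ordered pairs."""
    # D: total variation distance between Bernoulli(p_i) and Bernoulli(p_j)
    pred_dist = np.abs(probs[:, None] - probs[None, :])
    # d: Euclidean distance between the data points (an assumed metric)
    data_dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    # Only pairs whose predictions differ more than the points do are penalised
    violation = np.maximum(0.0, pred_dist - data_dist)
    return violation.mean()  # the diagonal (i == j) contributes zeros


def total_loss(probs, y, X, weight=1.0):
    """Binary cross-entropy plus the weighted fairness penalty."""
    eps = 1e-12  # avoids log(0)
    bce = -np.mean(y * np.log(probs + eps) + (1 - y) * np.log(1 - probs + eps))
    return bce + weight * individual_fairness_penalty(probs, X)
```

In an actual training loop the same expression would be written in an autodiff framework so that the penalty contributes gradients; NumPy is used here only to show the arithmetic.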

Which fairness criteria should we use?

We've seen several statistical definitions as well as Individual Fairness.

There's no right answer: all of the previous examples are "fair" in some sense. It's important to consult domain experts to find the best fit for each problem.

There is no one-size-fits-all solution.

Delayed Impact of Fair Learning

In the real world there are implications.

An individual doesn't just cease to exist after we've made our loan or bail decision.

The decision we make has consequences.

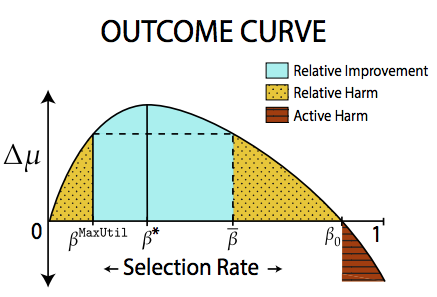

The Outcome Curve

Possible areas

| Area | Description |
|---|---|
| Active Harm | Expected change in credit score of an individual is negative |
| Stagnation | Expected change in credit score of an individual is 0 |
| Improvement | Expected change in credit score of an individual is positive |

Possible areas

| Area | Description |
|---|---|
| Relative Harm | Expected change in credit score of an individual is less than if the selection policy had been to maximize profit |
| Relative Improvement | Expected change in credit score of an individual is better than if the selection policy had been to maximize profit |

Removing a sensitive feature

"If we just remove the sensitive feature, then our model can't be unfair"

This doesn't work. Why?

Removing a sensitive feature

Because ML methods are excellent at finding patterns in data: features that correlate with the sensitive feature act as proxies for it.

Pedreschi et al.
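A small synthetic illustration of the proxy problem follows. Everything in it is made up for the demonstration: the data are random draws, `proxy` stands for any hypothetical feature correlated with the sensitive attribute, and the noise level is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 2000

s = rng.integers(0, 2, size=n)             # sensitive attribute (removed before training)
proxy = s + rng.normal(0.0, 0.3, size=n)   # hypothetical feature correlated with s
unrelated = rng.normal(0.0, 1.0, size=n)   # feature independent of s

# Even with `s` removed, a simple threshold on the proxy recovers it
# for most individuals, so a model can still condition on it implicitly.
s_recovered = (proxy > 0.5).astype(int)
recovery_accuracy = (s_recovered == s).mean()

corr_proxy = np.corrcoef(proxy, s)[0, 1]
corr_unrelated = np.corrcoef(unrelated, s)[0, 1]

print(f"s recovered from proxy with accuracy {recovery_accuracy:.2f}")
print(f"correlation with s: proxy={corr_proxy:.2f}, unrelated={corr_unrelated:.2f}")
```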

Reweighing

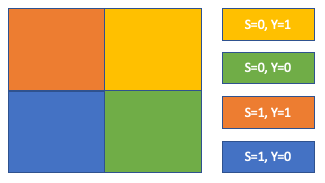

Kamiran & Calders determined that one source of unfairness was imbalanced training data.

Simply count the current distribution of demographics

Reweighing

Then either up/down-sample or assign instance weights to members of each group in the training set so that the results are "normalised".
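The instance-weight variant can be sketched as follows, using the weight $w(s, y) = P(s)\,P(y) / P(s, y)$ from Kamiran & Calders, i.e. the ratio of the frequency expected under independence to the observed frequency. The function names and the toy data in the usage note are illustrative assumptions.

```python
import numpy as np


def reweighing_weights(s, y):
    """Instance weights w(s, y) = P(s) * P(y) / P(s, y), estimated by counting."""
    s = np.asarray(s)
    y = np.asarray(y)
    weights = np.empty(len(y), dtype=float)
    for s_val in np.unique(s):
        for y_val in np.unique(y):
            mask = (s == s_val) & (y == y_val)
            if mask.any():
                expected = (s == s_val).mean() * (y == y_val).mean()
                observed = mask.mean()
                weights[mask] = expected / observed
    return weights


def weighted_positive_rate(s, y, weights, group):
    """Weighted fraction of positive labels within one group."""
    in_group = np.asarray(s) == group
    positives = in_group & (np.asarray(y) == 1)
    return weights[positives].sum() / weights[in_group].sum()
```

For example, with `s = [0,0,0,0,1,1,1,1]` and `y = [1,1,1,0,1,0,0,0]`, the over-represented combinations get weight 2/3 and the under-represented ones get weight 2, after which both groups have the same weighted positive rate.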

Problems with doing this?

Any Ideas?

Problems with doing this?

What does this representation mean?

The learned representation is uninterpretable by default. Recently, Quadrianto et al. constrained the representation to be in the same space as the input, so that we can look at what changed.

Problems with doing this?

What if the data user (the vendor) decides to be fair as well?

Referred to as "fair pipelines". Work has only just begun exploring these. Current research shows that these don't work (at the moment!)

Variational Fair Autoencoder

idea: Let's "disentangle" the sensitive attribute using the variational autoencoder framework!

Variational Fair Autoencoder

Louizos et al. 2017

Variational Fair Autoencoder

Where $z_1$ and $z_2$ are encouraged to conform to a prior distribution

Problems with doing this?

Similar to adversarial model, but more principled.

How to enforce fairness?

During Training

Instead of building a fair representation, we just make the fairness constraints part of the objective during training of the model. An early example of this is by Zafar et al.

How to enforce fairness?

During Training

Suppose we have a loss function $\mathcal{L}(\theta)$.

For an unconstrained classifier, we would simply solve

$$ \min_\theta \mathcal{L}(\theta) $$

How to enforce fairness?

During Training

To reduce disparate mistreatment (unequal misclassification rates across groups), Zafar et al. add constraints to the optimization problem.

$$ \begin{aligned} \min_\theta \quad & \mathcal{L}(\theta) \\ \text{subject to} \quad & P(\hat{y} \neq y \mid s = 0) - P(\hat{y} \neq y \mid s = 1) \leq \epsilon \\ & P(\hat{y} \neq y \mid s = 0) - P(\hat{y} \neq y \mid s = 1) \geq -\epsilon \end{aligned} $$

Transparency and Fairness

Fairness without constraints

- Most fairness methods for "during training" are formulated as constraints

- This means either

  - using very special models where constraint-based optimization is possible, or

  - using Lagrange multipliers

Lagrange multipliers

Constrained optimization problem:

$$\min\limits_\theta\, f(\theta) \quad\text{s.t.}~ g(\theta) = 0$$

This gets reformulated as the unconstrained problem

$$\min\limits_\theta\, f(\theta) + \lambda g(\theta)$$

where the multiplier $\lambda$ would have to be determined, but in practice is usually just set to some fixed value

Shortcomings of this

- The value of $\lambda$ has no real meaning and is usually just set to an arbitrary value

- It's hard to tell whether the constraint is fulfilled
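A small worked example makes both shortcomings concrete. The functions are chosen purely for illustration and are not from the lecture: take $f(\theta) = (\theta - 2)^2$ with the constraint $g(\theta) = \theta - 1 = 0$, so the constrained solution is $\theta = 1$. For a fixed $\lambda$, the penalised objective is minimised where its derivative vanishes:

$$\frac{d}{d\theta}\Big[(\theta - 2)^2 + \lambda(\theta - 1)\Big] = 2(\theta - 2) + \lambda = 0 \quad\Rightarrow\quad \theta^\ast = 2 - \frac{\lambda}{2}$$

$$g(\theta^\ast) = 1 - \frac{\lambda}{2}$$

The constraint is therefore fulfilled only for $\lambda = 2$. Any other fixed value, e.g. $\lambda = 1$ giving $\theta^\ast = 1.5$, yields a solution that violates it, and nothing in the value of $\lambda$ itself tells us by how much.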

Alternate approach

- Introduce pseudo labels $\bar{y}$.

- Make sure $\bar{y}$ is fair ($\bar{y}\perp s$).

- Use $\bar{y}$ as prediction target.

$$P(y=1|x,s,\theta)=\sum\limits_{\bar{y}\in\{0,1\}} P(y=1|\bar{y},s)P(\bar{y}|x,\theta)$$
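The sum above has only two terms, so it can be written out directly. In this sketch the lookup table `p_y1_given_ybar_s` holds $P(y=1|\bar{y},s)$ indexed as `[ybar, s]`, and `p_ybar1_given_x` is the model output $P(\bar{y}=1|x,\theta)$; both names, and the numbers in the usage note, are hypothetical.

```python
import numpy as np


def predict_y_given_pseudo(p_ybar1_given_x, p_y1_given_ybar_s, s):
    """P(y=1|x,s) = sum over ybar in {0,1} of P(y=1|ybar,s) * P(ybar|x)."""
    p_ybar1 = np.asarray(p_ybar1_given_x, dtype=float)
    s = np.asarray(s)
    # table[ybar, s] = P(y=1 | ybar, s)
    table = np.asarray(p_y1_given_ybar_s, dtype=float)
    term_ybar0 = table[0, s] * (1.0 - p_ybar1)
    term_ybar1 = table[1, s] * p_ybar1
    return term_ybar0 + term_ybar1
```

With the made-up table `[[0.1, 0.0], [1.0, 0.8]]` and $P(\bar{y}=1|x) = 0.5$, an individual with $s=0$ gets $0.1 \cdot 0.5 + 1.0 \cdot 0.5 = 0.55$ and one with $s=1$ gets $0.0 \cdot 0.5 + 0.8 \cdot 0.5 = 0.4$.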

Alternate approach

For demographic parity:

$P(\bar{y}|s=0)=P(\bar{y}|s=1)$

This allows us to compute $P(y=1|\bar{y},s)$, which is all we need.

Details: Tuning Fairness by Balancing Target Labels (Kehrenberg et al., 2020)

Multinomial sensitive attribute

The usual metrics for Demographic Parity are only defined for binary attributes: they are either a ratio or a difference of two probabilities.

Alternative:

Hirschfeld-Gebelein-Rényi Maximum Correlation Coefficient (HGR)

- takes values in $[0,1]$

- $HGR(Y,S) = 0$ iff $Y\perp S$

- $HGR(Y,S) = 1$ iff there is a deterministic function to map between them
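When both $Y$ and $S$ are discrete, HGR can be computed exactly: it equals the second-largest singular value of the matrix with entries $P(y,s)/\sqrt{P(y)P(s)}$. The sketch below uses this result with empirical frequencies; the function name is a hypothetical choice, and continuous variables would need an approximation that is not shown here.

```python
import numpy as np


def hgr_discrete(y, s):
    """HGR maximal correlation of two discrete samples via an SVD."""
    y = np.asarray(y).ravel()
    s = np.asarray(s).ravel()
    y_vals, y_idx = np.unique(y, return_inverse=True)
    s_vals, s_idx = np.unique(s, return_inverse=True)
    # Empirical joint distribution P(y, s)
    joint = np.zeros((len(y_vals), len(s_vals)))
    np.add.at(joint, (y_idx, s_idx), 1.0)
    joint /= joint.sum()
    p_y = joint.sum(axis=1)
    p_s = joint.sum(axis=0)
    # Normalised matrix; its largest singular value is always 1
    q = joint / np.sqrt(np.outer(p_y, p_s))
    singular_values = np.linalg.svd(q, compute_uv=False)
    # If either variable is constant there is no second singular value
    return float(singular_values[1]) if len(singular_values) > 1 else 0.0
```

This works for a sensitive attribute with any number of categories, which is the point of using HGR instead of a ratio or difference of two probabilities.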

Causality

Motivation:

- Fairness methods so far have only looked at correlations between the sensitive attribute and the prediction

- But isn't a causal path more important?

Causality

Example:

Graduate admissions at UC Berkeley (1973).

Men were found to be accepted with much higher probability. Was it discrimination?

Causality

Was it discrimination?

Not necessarily! The reason was that women were applying to more competitive departments.

Departments like medicine and law are very competitive (hard to get in).

Departments like computer science are much less competitive (because it's boring ;).

Causality

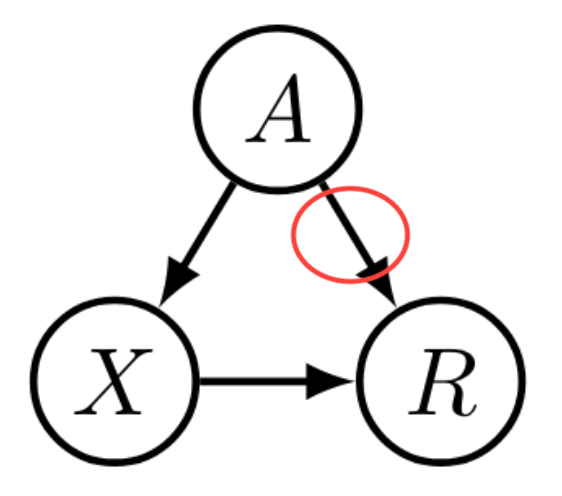

- $R$: admission decision

- $A$: gender

- $X$: department choice
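The effect can be reproduced with invented numbers. The counts below are hypothetical and are not the Berkeley data: within each department both genders are admitted at the same rate, so gender $A$ influences the decision $R$ only through department choice $X$, yet the aggregated rates differ widely.

```python
# (gender, department) -> (applicants, admitted); all numbers are made up
applications = {
    ("men", "less_competitive"): (800, 640),
    ("men", "competitive"): (200, 40),
    ("women", "less_competitive"): (200, 160),
    ("women", "competitive"): (800, 160),
}


def admission_rate(rows):
    """Admitted divided by applicants, summed over the given rows."""
    applicants = sum(n_applied for n_applied, _ in rows)
    admitted = sum(n_admitted for _, n_admitted in rows)
    return admitted / applicants


# Aggregated over departments: a large gap between genders
overall = {
    gender: admission_rate(
        [counts for (g, _), counts in applications.items() if g == gender]
    )
    for gender in ("men", "women")
}

# Within each department: identical rates for both genders
per_department = {
    key: admitted / applicants
    for key, (applicants, admitted) in applications.items()
}

print(overall)
print(per_department)
```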

Causality

If we can understand what causes unfair behavior, then we can take steps to mitigate it.

Basic idea: a sensitive attribute may only affect the prediction via legitimate causal paths.

But how do we model causation?

Causal Graphs

Solution: build causal graphs of your problem

Problem: causality cannot be inferred from observational data

- Observational data can only show correlations

- For causal information we have to do experiments. (But that is often not ethical.)

- Currently: just guess the causal structure

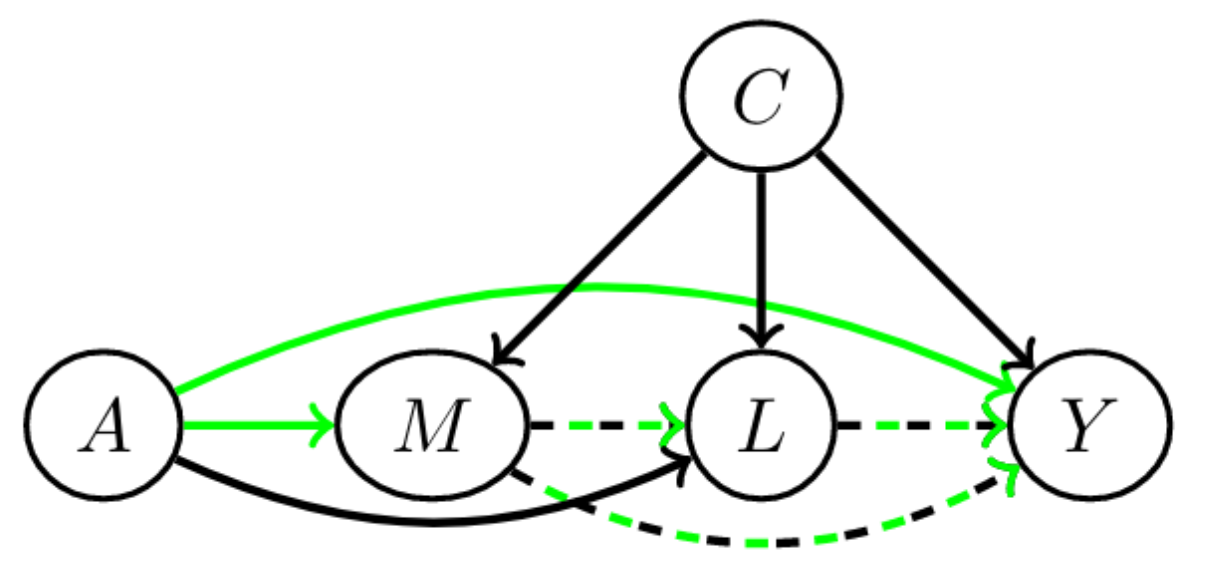

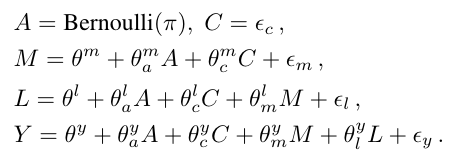

Path-specific causal fairness

$A$ is a sensitive attribute. Its direct effect on $Y$ and its effect through $M$ are considered unfair, but its effect through $L$ is considered admissible.

Path-specific causal fairness

With a structural causal model (SCM), like this:

You can figure out exactly how each feature should be incorporated in order to make a fair prediction.

Path-specific causal fairness

- Advantage: we can figure out exactly how to avoid unfairness

- Problem: requires an SCM, which we usually don't have and which is hard to get

- Problem: we have to decide for each feature individually whether it's admissible

- This will not work for computer vision tasks where the raw features are pixels

Counterfactual Fairness

- slightly different idea, but also based on structural causal models (SCMs)

- we're basically asking: in the counterfactual world where your gender was different, would you have been accepted?

- counterfactual: something that has not happened or is not the case

Counterfactuals

Example of a counterfactual statement:

If Oswald had not shot Kennedy, no-one else would have.

This is counterfactual, because Oswald did in fact shoot Kennedy.

It's a claim about a counterfactual world.

Counterfactual Fairness

$U$: set of all unobserved background variables

$P(\hat{y}_{s=i}(U) = 1|x, s=i)=P(\hat{y}_{s=j}(U) = 1|x, s=i)$

$i, j \in \{0, 1\}$

$\hat{y}_{s=k}$: prediction in the counterfactual world where $s=k$

practical consequence: $\hat{y}$ is counterfactually fair if it does not causally depend (as defined by the causal model) on $s$ or any descendants of $s$.
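A toy example of this practical consequence, under a deliberately simple and entirely assumed SCM: a single feature generated as $x = 2s + u$, where $u$ is the background variable. Because the model is additive, $u$ can be recovered exactly from an observation, and the counterfactual feature is obtained by re-running the structural equation with the other value of $s$. The coefficient, thresholds, and data are hypothetical, and the predictors are deterministic, so the probabilities in the definition reduce to 0 or 1.

```python
import numpy as np

EFFECT_OF_S = 2.0  # assumed structural coefficient in x = EFFECT_OF_S * s + u


def abduct_u(x, s):
    """Recover the background variable u from an observed (x, s)."""
    return x - EFFECT_OF_S * s


def counterfactual_x(x, s, s_cf):
    """Feature value in the world where s had been s_cf, keeping u fixed."""
    return EFFECT_OF_S * s_cf + abduct_u(x, s)


def predict_from_x(x):
    """Uses x, a descendant of s: not counterfactually fair."""
    return (x > 1.5).astype(int)


def predict_from_u(x, s):
    """Uses only u, a non-descendant of s: counterfactually fair."""
    return (abduct_u(x, s) > 0.5).astype(int)


x = np.array([2.4, 0.9, 3.1, 0.2])
s = np.array([1, 0, 1, 0])
s_cf = 1 - s
x_cf = counterfactual_x(x, s, s_cf)

changed_x = predict_from_x(x) != predict_from_x(x_cf)
changed_u = predict_from_u(x, s) != predict_from_u(x_cf, s_cf)
print("predictions that change (using x):", int(changed_x.sum()))
print("predictions that change (using u):", int(changed_u.sum()))
```

The second predictor is only as trustworthy as the assumed structural equation: with a different causal model, the recovered $u$ and hence the predictions would differ.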

The problem of getting the SCM

- Causal models cannot be learned from observational data only

- You can try, but there is no unique solution

- Different causal models can lead to very different results

Example with Causal Graphs

Example: Law school success

Task: given GPA score and LSAT (law school entry exam), predict grades after one year in law school: FYA (first year average)

Additionally two sensitive attributes: race and gender

Example with Causal Graphs

Two possible graphs

Causality — Summary

- Causal models are very promising and arguably the "right" way to solve the problem.

- However, these models are difficult to obtain.